Understanding Hive and Hadoop Security (Azure)

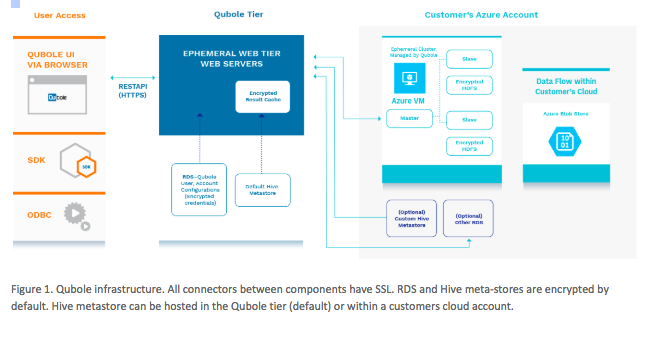

Qubole is built on the open-source components Hadoop, Hive, Spark, and Presto, so by default it adopts the standard security model of each tool. Qubole also acknowledges that these default models have gaps, and has been developing its own security model to strengthen them. The end result is a platform that is more secure than the default open-source stack and more secure than most other commodity offerings.

Qubole is a multi-platform cloud service that utilises the advanced security features of each platform. Standard security features such as Virtual Networks, security roles, secure key access, SSH, and endpoint security are utilised by default. For more details about platform security, see the Azure or AWS security pages. Data stored on the QDS servers or in the unified Hive Metastore is encrypted by default.

Hadoop Security

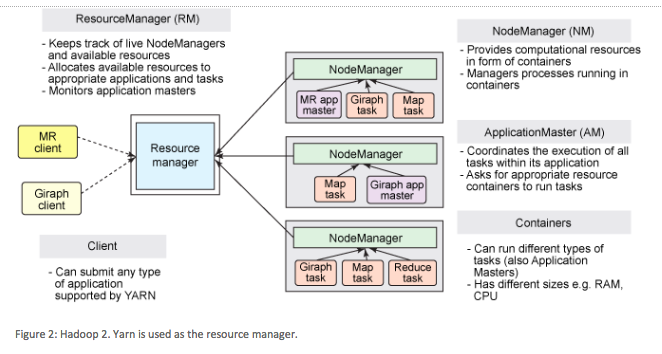

On Azure, Qubole supports Hadoop 2 (YARN). YARN provides better performance than Hadoop 1 and also reduces inter-node traffic and intermediate writes and reads to external storage.

In the figure above (Figure 2), you can see that clients, resource managers, and nodes communicate with one another. All Hadoop processing on Qubole happens in the Hadoop cluster inside the Virtual Network: the ResourceManager (RM), NodeManagers (NM), and ApplicationMasters (AM) all run within the same VNet.

Data at Rest

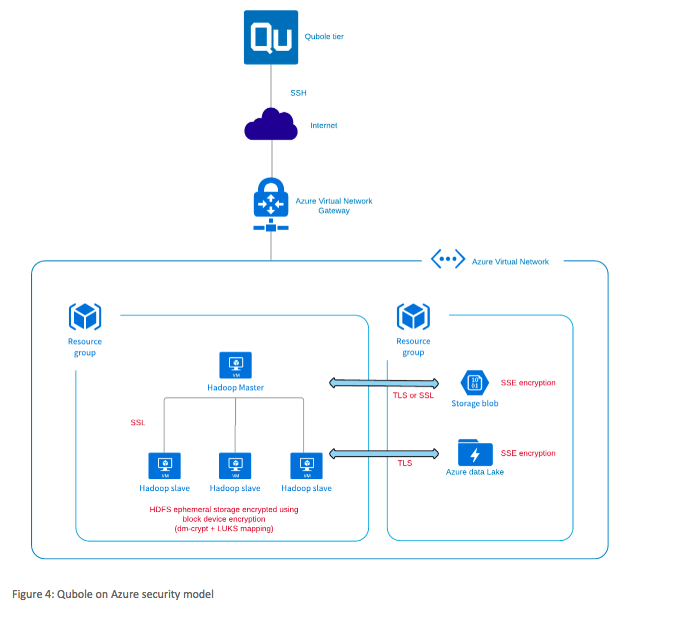

On Azure, all data at rest is encrypted by default using Storage Service Encryption (SSE). Data in ephemeral HDFS storage (HDFS on the Hadoop nodes, used for disk spill and temporary storage) is protected by block-device encryption (dm-crypt with LUKS mapping). See also Understanding Data Encryption in QDS.

Data in Transit

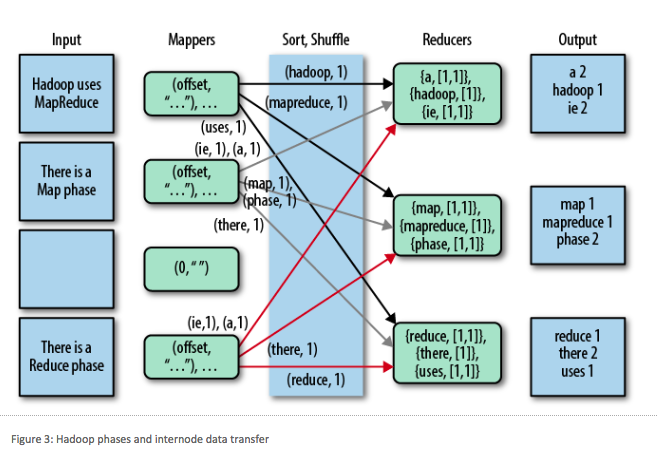

A job may have one or more shuffle, copy, sort, and reduce phases. Data in transit can be encrypted in either of two ways:

To enable encryption at the account level, create a Qubole Support ticket to turn on two settings, hadoop2.use_ssl_for_azure and ssl.enable_in_cluster. Together, these settings enable encryption of data in transit among the cluster nodes, and between the cluster and Azure storage.

Alternatively, to enable encryption at the cluster level, add fs.azure.https.only=true in the Override Hadoop Configuration Variables section of the Clusters page, under the Advanced Configuration tab. This encrypts only data in transit between the cluster and Azure storage.
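As a quick sanity check before saving a cluster, the override text can be inspected for the fs.azure.https.only=true setting described above. The following is a minimal sketch, not a Qubole tool: the helper names parse_overrides and storage_https_only are hypothetical, and it assumes the override field holds one key=value pair per line.

```python
def parse_overrides(text):
    """Parse key=value lines (assumed override format) into a dict."""
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue  # skip blank lines
        key, sep, value = line.partition("=")
        if not sep:
            raise ValueError(f"not a key=value line: {raw!r}")
        settings[key.strip()] = value.strip()
    return settings


def storage_https_only(settings):
    """Return True if the overrides force HTTPS between cluster and Azure storage."""
    return settings.get("fs.azure.https.only", "").lower() == "true"


# Hypothetical override text, as it might be pasted into the cluster settings.
overrides = """
fs.azure.https.only=true
"""

print(storage_https_only(parse_overrides(overrides)))  # prints True
```

Note that this only checks the cluster-level setting. The account-level settings are turned on by Qubole Support and would not appear in this override text.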

Hive Security

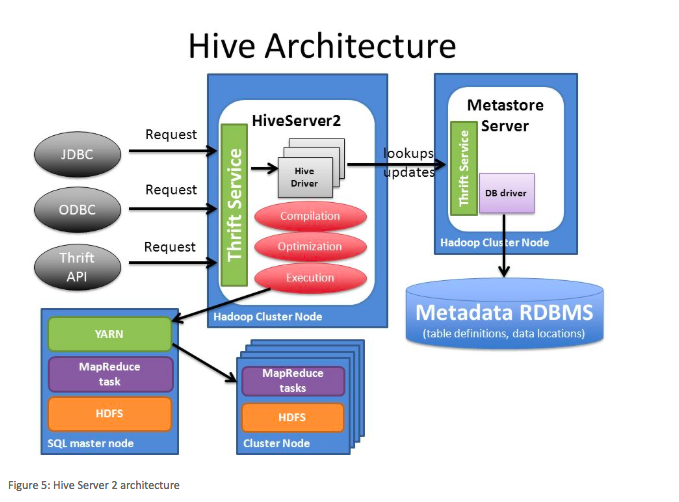

Here is a HiveServer2 architecture diagram.

Hive utilises the Hadoop security model for query execution, so all the Hadoop security described above also applies to Hive queries. When utilising HiveServer2 (HS2) with Hive, users connect to HS2 either through the Qubole servers (for example, from the QDS Analyze page) or directly from a Business Intelligence (BI) tool over ODBC/JDBC.

Communication through the QDS servers to HS2 is encrypted by default, and encryption from a BI tool to HS2 is also supported. This is another security feature developed by Qubole to make QDS more secure than the default Hadoop model.
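For orientation, the sketch below shows what an SSL-enabled connection string looks like with the stock Apache Hive JDBC driver, which accepts ssl=true, sslTrustStore, and trustStorePassword as URL parameters. This illustrates the general HS2 mechanism only: Qubole's own ODBC/JDBC drivers may use a different URL format, and the helper name hs2_jdbc_url, the host, and the truststore path are all hypothetical.

```python
def hs2_jdbc_url(host, port=10000, database="default",
                 truststore=None, truststore_password=None):
    """Build an Apache Hive JDBC URL that requests an SSL connection to HS2."""
    url = f"jdbc:hive2://{host}:{port}/{database};ssl=true"
    if truststore:
        # Truststore holding the certificate used to verify the HS2 server.
        url += f";sslTrustStore={truststore}"
    if truststore_password:
        url += f";trustStorePassword={truststore_password}"
    return url


# Placeholder host and truststore path, for illustration only.
print(hs2_jdbc_url("hs2.example.com", truststore="/path/to/truststore.jks"))
# prints jdbc:hive2://hs2.example.com:10000/default;ssl=true;sslTrustStore=/path/to/truststore.jks
```

A BI tool would normally take these values through its connection dialog rather than a hand-built string; consult the driver documentation for the exact parameters it expects.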