Download Result as a Single File

Use the single-file result download feature to stitch large results into a single result file.

When the AWS Multipart Upload limit is insufficient to complete the job, Qubole downloads the set of files to your cluster, stitches the results, and uploads a single result file to your defloc bucket (configured through the Account Settings) along with the other result and log files.

Qubole provides you with a link to download the result file. This link is valid for 24 hours and fails with an error after it expires. Open the command in a new window to automatically generate a fresh download link.

Qubole recommends EBS upscaling to avoid an error scenario where the cluster runs out of local disk space while stitching results whose size exceeds the available disk space.
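The stitching step and the disk-space concern described above can be illustrated with a minimal Python sketch. This is not Qubole's implementation: the function name `stitch_parts` and the file layout are hypothetical, and the sketch assumes the result parts have already been downloaded to local disk. It checks that the free space covers the combined size of the parts before concatenating them in order.

```python
import shutil
import tempfile
from pathlib import Path


def stitch_parts(part_paths, merged_path):
    """Concatenate part files, in the given order, into one merged file.

    Hypothetical helper for illustration only; not Qubole's code.
    """
    part_paths = [Path(p) for p in part_paths]
    merged_path = Path(merged_path)

    # The merged file needs roughly as much space as all parts combined.
    required_bytes = sum(p.stat().st_size for p in part_paths)
    free_bytes = shutil.disk_usage(merged_path.parent).free
    if required_bytes > free_bytes:
        raise OSError(
            f"need {required_bytes} bytes to stitch, only {free_bytes} free"
        )

    with merged_path.open("wb") as merged:
        for part in part_paths:
            with part.open("rb") as src:
                # Stream in chunks so a large part is never held in memory.
                shutil.copyfileobj(src, merged)
    return merged_path


if __name__ == "__main__":
    workdir = Path(tempfile.mkdtemp())
    (workdir / "part-00000").write_text("id,name\n1,a\n")
    (workdir / "part-00001").write_text("2,b\n")
    merged = stitch_parts(
        [workdir / "part-00000", workdir / "part-00001"],
        workdir / "merged.csv",
    )
    print(merged.read_text())
```

Failing early, before any bytes are written, mirrors the reason for the EBS upscaling recommendation: running out of disk halfway through a merge wastes the work already done.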

Note

File merging is done only once. After the merged file is available, a reference to the result is stored. Result stitching is not triggered a second time.

Setting Up

Attach the advised cluster label, maintenance_single_file_result, to an existing or new cluster.

Note

Ensure you use the exact cluster label mentioned above (the value is case-sensitive).

Update your AWS policies based on this sample policy.

For more information, see: What are some examples of policies I should use to delegate access to Qubole for my Cloud accounts?

Note

If you are using a Dual IAM role, update the Secondary/Dual IAM role.

Policy Requirements

| Policy | Mandatory? | Description |
|---|---|---|
| s3:ListMultipartUploadParts | Yes | Lists and completes the process. |
| s3:AbortMultipartUpload | No | Deletes intermediate files generated by the result stitching job and saves storage costs. Result stitching continues to work without this permission. While this policy is not mandatory, Qubole recommends you provide it. |

For more information, see: Modifying a Role and Changing Permissions for an IAM User.
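As a rough illustration of the two permissions listed above, the following Python sketch builds an IAM policy statement that grants them on a bucket's objects. It is not Qubole's sample policy: the helper name `build_stitching_policy` and the bucket name `example-defloc-bucket` are hypothetical, and a real role also needs the other permissions that Qubole requires for the account.

```python
import json


def build_stitching_policy(bucket, include_abort=True):
    """Return an IAM policy document with the result-stitching permissions.

    `bucket` is the name of the default location bucket (assumed here).
    """
    # Mandatory permission from the table above.
    actions = ["s3:ListMultipartUploadParts"]
    if include_abort:
        # Optional but recommended: allows cleanup of intermediate files.
        actions.append("s3:AbortMultipartUpload")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": actions,
                # Both actions apply to objects, so the resource is bucket/*.
                "Resource": f"arn:aws:s3:::{bucket}/*",
            }
        ],
    }


print(json.dumps(build_stitching_policy("example-defloc-bucket"), indent=2))
```

The printed JSON can be merged into the existing policy of the role (or the Secondary/Dual IAM role) rather than used as a standalone policy.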

Qubole recommends the following minimum cluster configuration:

| Setting | Recommended Value |
|---|---|
| Cluster Type | Hadoop |
| Coordinator Node Type | c3.2xlarge |
| Worker Node Type | c3.large |
| Minimum Worker Nodes | 1 |
| Maximum Worker Nodes | 1 |
| EBS Volume Count | 1 |
| Enable EBS Upscaling | true |
| Maximum EBS Volume Count | 3 |
| Free Space Threshold (%) | 35 |
| Absolute Free Space Threshold (in GB) | Set as 35% of EBS volume size |
| Sampling Interval (in seconds) | 10 |
| Sampling Window | 3 |
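The Absolute Free Space Threshold is expressed relative to the EBS volume size, so its value in GB depends on the volume you choose. A small Python sketch of that arithmetic follows; the function name is hypothetical and the 100 GB volume size is only an example, not a Qubole recommendation.

```python
def absolute_free_space_threshold_gb(ebs_volume_size_gb, percent=35):
    """Return the absolute free-space threshold in GB for a given volume size."""
    return ebs_volume_size_gb * percent / 100


# Example: a 100 GB EBS volume (assumed size) gives a 35 GB threshold.
print(absolute_free_space_threshold_gb(100))
```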

Troubleshooting

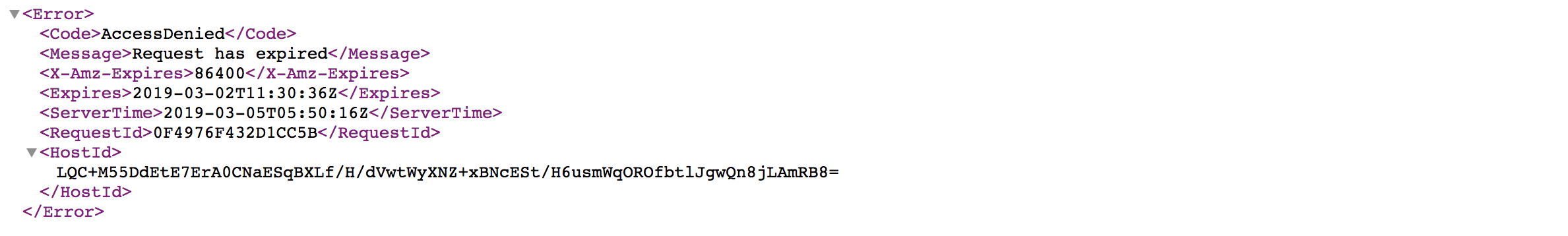

What can I do if the link to my result file expires?

Once your link has expired, you will receive the following error message.

Click Download Complete Raw Results (for the same command) to generate a fresh download link.

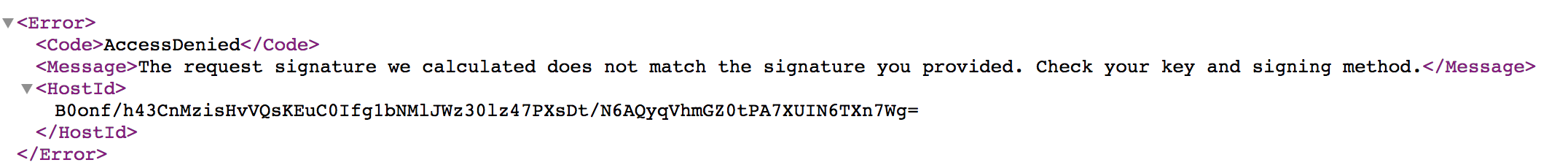

What can I do if I get an Access Denied error?

Add the s3:GetObject and s3:ListBucket permissions to your role, or include the AccessKey/SecretKey that you have configured with Qubole.
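To see which of these permissions a policy document lacks, a simple check such as the following Python sketch can help. It is a simplification under stated assumptions: the helper `missing_actions` is hypothetical, it only compares exact action names in `Allow` statements, and it ignores wildcards, `Deny` statements, and resource scoping, all of which affect what AWS actually permits.

```python
REQUIRED_READ_ACTIONS = {"s3:GetObject", "s3:ListBucket"}


def missing_actions(policy, required=REQUIRED_READ_ACTIONS):
    """Return the required actions not explicitly allowed by the policy."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        # IAM permits a single statement object instead of a list.
        statements = [statements]

    granted = set()
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        if isinstance(actions, str):
            # A single action may be given as a plain string.
            actions = [actions]
        granted.update(actions)
    return set(required) - granted


example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-defloc-bucket/*",
        }
    ],
}
print(missing_actions(example_policy))
```

An empty set means both permissions are named explicitly; it does not prove that access will succeed, since bucket policies and explicit denies can still block the request.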

What can I do if the merged result file is deleted from my S3 bucket?

Note

Qubole recommends you do not delete the result file.

If the result file is missing, contact Qubole Support.