Composing a Hive Query

Use the command composer on the Analyze page to compose a Hive query.

See Hive in Qubole for more information.

Note

Hadoop clusters support Hive queries. See Mapping of Cluster and Command Types for more information.

You can configure the Pig version on an Hadoop (Hive) cluster. Pig 0.11 is the default version. Pig 0.15 and Pig 0.17 (beta) are the other supported versions. You can also choose between MapReduce and Tez as the execution engine when you set the Pig 0.17 (beta) version.

Caution

Run only Hive DDL commands on a Presto cluster. Running Hive DML commands on a Presto cluster is not supported.

Note

Using the Supported Keyboard Shortcuts in Analyze describes the supported keyboard shortcuts.

Perform the following steps to compose a Hive query:

Navigate to the Analyze page and click Compose. Select Hive Query from the Command Type drop-down list.

Query Statement is selected by default in the drop-down list. Continue with step 3b if you choose this option.

Either:

To use a stored query, select Query Path from the drop-down, then specify the Cloud storage path that contains the Hive query file. Now skip to step 4.

Or:

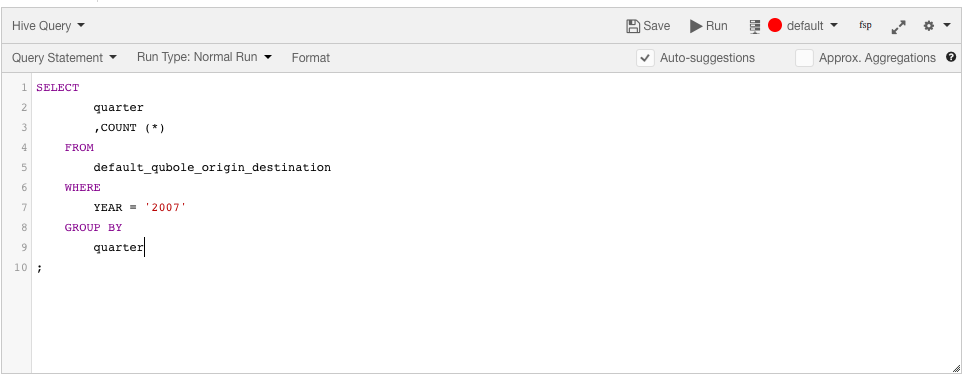

Enter the Hive query in the text field. Press Ctrl + Space in the command editor to get a list of suggestions. The following figure shows a sample query.

Select a Run Type. The default is Normal. The other options are Test Run and Constrained Run

The primary use of Test Run and Constrained Run options is during query authoring. Query authoring is often an iterative and error-prone process. When the input datasets are large, it may take minutes or hours for a query to complete and therefore, it takes a long time for you to figure out bugs in expressions and function calls. These options help you run the same query against a small subset of data to get to see some results quickly and this helps to fine-tune Hive queries.

Test Run can be used when you want to read a maximum size (in MB) of data by limiting the dataset size. The default data size to be limited is 100 MB.

Constrained Run can be used when, irrespective of the data, you want the query to run for a time range and this is done by specifying a minimum time (in minutes) and maximum time (in minutes). The default time range is 5 to 10 minutes.

Note

Use the tooltip

to know more information on each field.

Click Run to execute the query. Click Save if you want to run the same query later. (See Workspace for more information on saving queries.)

Note

The metastore consistency check (MSCK) result is displayed in the Logs tab of the Analyze page when

the configuration parameter hive.qubole.write.msck.result.to.log is enabled at the query or cluster level,

or in a Hive bootstrap. A cluster restart is required for the cluster-level setting.

You can see the result under the Results tab, and the logs under the Logs tab. For more information on how to download command results and logs and logs, see Downloading Results and Logs.

Note

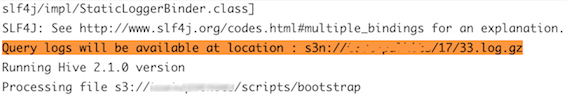

Log for a particular Hive query is available at <Default location>/cluster_inst_id/<cmd_id>.log.gz.

Viewing a Detailed Hive Log

A detailed log for each Hive query executed using HiveServer2 or Hive-on-coordinator can be uploaded to a subdirectory in the default location in Cloud storage within a couple of minutes of query completion. Detailed logs are not available by default. Create a ticket with Qubole Support to enable this capability.

Once it’s enabled, you can find the location of the logs in the Logs tab of the Analyze page.

Here is an example of the detailed log location under the Logs tab.

Viewing Multi-line Column Data in Query Results

Qubole supports newline (\n) and carriage return (\r) characters in Hive query results by escaping them in the Hive

result set and then un-escaping in the UI; this prevents any problems with the display of multi-line columns. To

enable this capability, create a ticket with Qubole Support.

Note that once it’s enabled, even a simple SELECT query requires a cluster start.