Running Spark Notebooks in a Schedule

You can use the Qubole Scheduler to run notebooks at periodic intervals without manual intervention. (Use the Notebooks section of the QDS UI to compose notebook paragraphs.)

Note

If your scheduled notebook runs on Zeppelin 0.8 or later, all the paragraphs in the scheduled notebook run sequentially.

Proceed as follows:

Navigate to the Scheduler page and click the +Create button in the left pane to create a schedule.

Note

- Press Ctrl + / to see the list of available keyboard shortcuts. For more information, see

For more information on using the QDS user interface to schedule jobs, see Qubole Scheduler.

Important

You can schedule a notebook to run even when its associated cluster is down.

Create a schedule for running a notebook from the General Tab:

Enter a name in the Schedule Name text field. This field is optional. If it is left blank, a system-generated ID is set as the schedule name.

In the Tags text field, add up to six tags to group commands together. Tags help in identifying commands. Each tag can contain a maximum of 20 characters. This field is optional.

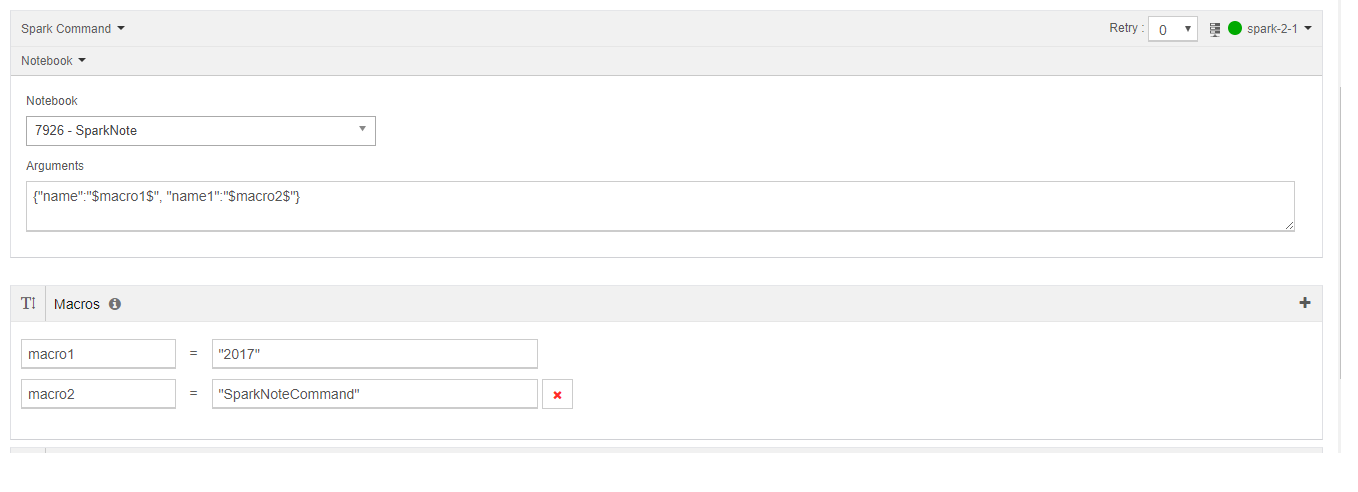
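
If you prepare tags outside the UI (for example, in a script that generates schedule names and tags), a quick check against the limits described above can catch mistakes early. The following is a minimal sketch, not part of QDS; the function name `validate_tags` and the constants are hypothetical and only encode the six-tag and 20-character limits stated for the Tags field.

```python
MAX_TAGS = 6          # maximum number of tags stated for the Tags field
MAX_TAG_LENGTH = 20   # maximum number of characters per tag


def validate_tags(tags):
    """Return a list of problems found in `tags`.

    An empty list means the tags fit the documented limits.
    An empty `tags` list is accepted because the field is optional.
    """
    problems = []
    if len(tags) > MAX_TAGS:
        problems.append(f"too many tags: {len(tags)} (maximum is {MAX_TAGS})")
    for tag in tags:
        if len(tag) > MAX_TAG_LENGTH:
            problems.append(
                f"tag {tag!r} has {len(tag)} characters (maximum is {MAX_TAG_LENGTH})"
            )
    return problems
```

Calling `validate_tags(["etl", "nightly"])` returns an empty list, while a list of seven tags or a 21-character tag yields one problem message each.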

In the command field, select Spark Command from the drop-down list.

Select a cluster from the drop-down list on the right of the page.

Select Notebook from the drop-down list that contains Scala, Python, Command Line, SQL, R, and Notebook.

A list of notebooks appears; these are the notebooks associated with the cluster you selected.

Choose the notebook that you want to schedule to run.

See Run a Spark Notebook from the Analyze Query Composer for more information.

Optionally, enter arguments in the Arguments field and pass their values using the Scheduler’s macros, for example:
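
As a rough illustration of how macro values could flow into notebook arguments, the sketch below replaces placeholders in argument values with macro values. It is only a local model of the idea, not the Scheduler's implementation: the `$name$` placeholder syntax, the argument names (`run_date`, `region`), and the macro `formatted_date` are assumptions made for illustration, so check the Scheduler's macro documentation for the exact syntax.

```python
import re

# Assumption: placeholders are written as $name$.
PLACEHOLDER = re.compile(r"\$(\w+)\$")


def resolve_arguments(arguments, macros):
    """Replace each $name$ placeholder in the argument values with macros[name].

    Raises KeyError if an argument refers to a macro that is not defined.
    """
    def substitute(match):
        name = match.group(1)
        if name not in macros:
            raise KeyError(f"macro {name!r} is not defined")
        return str(macros[name])

    return {key: PLACEHOLDER.sub(substitute, value) for key, value in arguments.items()}


# Hypothetical notebook arguments and macro values.
arguments = {"run_date": "$formatted_date$", "region": "us-east"}
macros = {"formatted_date": "2024-01-15"}
resolved = resolve_arguments(arguments, macros)
```

Here `resolved` maps `run_date` to the macro's value and leaves `region` unchanged, because that value contains no placeholder.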

Now follow the steps under Creating a New Schedule.

For more information about viewing and editing a schedule, see Viewing a Schedule and Editing and Cloning a Schedule.